Welcome to the EVIMO Dataset Webpage!

An Event Camera Motion Segmentation Dataset

Overview

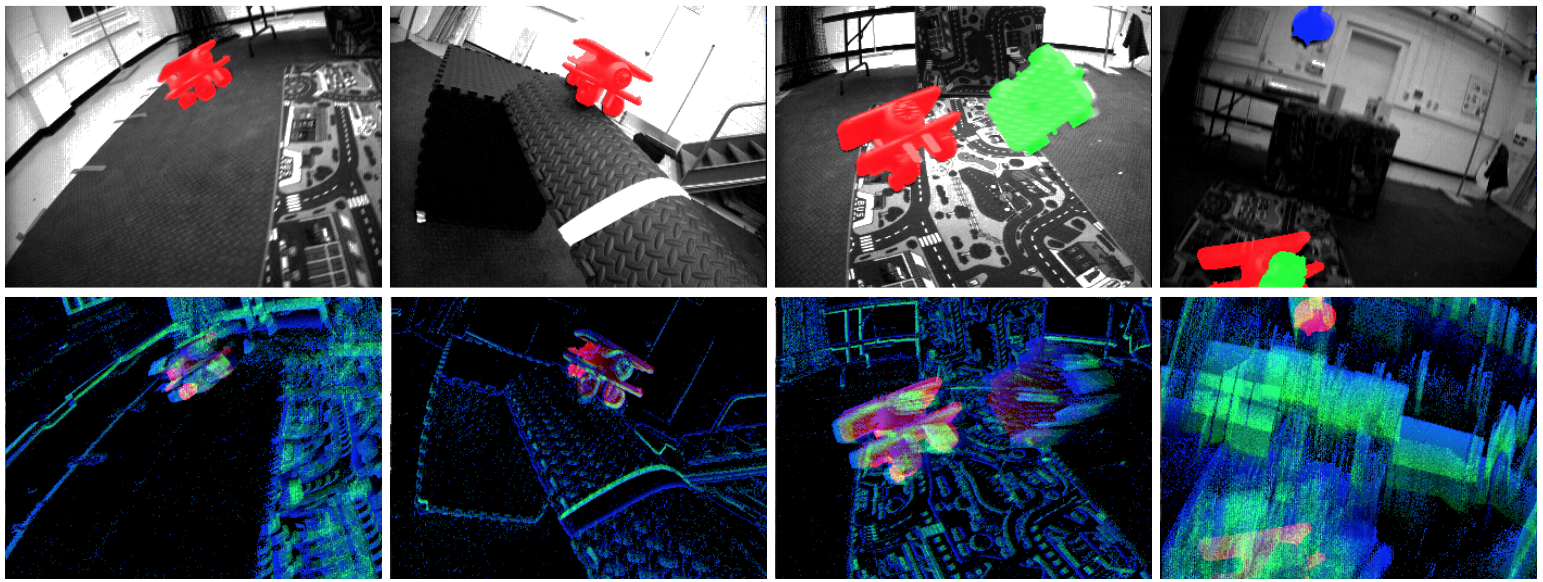

EVIMO is a collection of indoor datasets for motion segmentation and egomotion estimation gathered with a variety of event-based sensors.

The dataset features 6-DoF poses for the camera and the independently moving objects, updated at 200 Hz, and pixelwise object masks at 40 Hz. Because the masks are generated from the recorded poses, they can be re-generated at any frame rate up to 200 Hz, or higher with pose interpolation. Depth maps and classical camera frames are also available for most sequences.
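To illustrate what "pose interpolation" can mean here, the following is a minimal sketch that estimates a pose between two consecutive 200 Hz samples: linear interpolation for the translation and spherical linear interpolation (slerp) for the rotation. The function names, the `(w, x, y, z)` quaternion ordering, and the sample values are assumptions made for this example; they are not taken from the EVIMO tools, which may interpolate differently.

```python
import numpy as np

def slerp(q0, q1, alpha):
    """Spherical linear interpolation between two unit quaternions.

    Quaternions are assumed to be ordered (w, x, y, z); alpha is in [0, 1].
    """
    q0 = np.asarray(q0, dtype=float)
    q1 = np.asarray(q1, dtype=float)
    q0 = q0 / np.linalg.norm(q0)
    q1 = q1 / np.linalg.norm(q1)
    dot = np.dot(q0, q1)
    if dot < 0.0:
        # q and -q encode the same rotation; flip to follow the shorter arc.
        q1 = -q1
        dot = -dot
    if dot > 0.9995:
        # Nearly identical rotations: normalized linear interpolation is stable.
        q = q0 + alpha * (q1 - q0)
        return q / np.linalg.norm(q)
    theta = np.arccos(dot)
    return (np.sin((1.0 - alpha) * theta) * q0 + np.sin(alpha * theta) * q1) / np.sin(theta)

def interpolate_pose(t, t0, p0, q0, t1, p1, q1):
    """Interpolate position and orientation at time t, with t0 <= t <= t1."""
    alpha = (t - t0) / (t1 - t0)
    p = (1.0 - alpha) * np.asarray(p0, dtype=float) + alpha * np.asarray(p1, dtype=float)
    return p, slerp(q0, q1, alpha)

# Two made-up samples 5 ms apart (one 200 Hz period): the object moves 2 cm
# along x and rotates 90 degrees about z.
t0, t1 = 0.000, 0.005
p0, p1 = [0.0, 0.0, 1.0], [0.02, 0.0, 1.0]
q0 = [1.0, 0.0, 0.0, 0.0]
q1 = [np.cos(np.pi / 4), 0.0, 0.0, np.sin(np.pi / 4)]

p_mid, q_mid = interpolate_pose(0.0025, t0, p0, q0, t1, p1, q1)
print(p_mid, q_mid)
```

Evaluating `interpolate_pose` at a denser set of timestamps gives the poses needed to render masks at rates above 200 Hz, assuming the motion between samples is smooth enough for this model to hold.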

There are two datasets:

1) EVIMO featuring very fast-moving objects, with the depth maps created from high-resolution scans of the objects

2) EVIMO2 featuring objects on a board, all scanned at high resolution

Data Collection

Event-based sensors have extraordinary temporal resolution and can work in poor lighting conditions where classical cameras fail. To provide the best possible ground truth, we use a Vicon motion capture system to track the locations of the camera and the objects. The ground truth depth and masks are then synthesized offline during data postprocessing by projecting static 3D scans of the room and the objects onto the camera plane. This approach allows us to capture data in extreme darkness with fast-moving objects, without sacrificing the quality of the dataset. Our approach to data collection is described in [1], and the authors would appreciate it if you cite the paper when you use EVIMO in academic research.
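The projection step can be pictured with a simplified sketch: points from a 3D scan are transformed into the camera frame using a tracked pose and projected with an ideal pinhole model, keeping the nearest depth per pixel. Everything below (function names, the intrinsic matrix `K`, the image size, the sample points) is a hypothetical illustration; the actual EVIMO pipeline is implemented in C++ and may differ, for example in how it handles lens distortion and surface rendering.

```python
import numpy as np

def project_points(points_world, R_cw, t_cw, K):
    """Project Nx3 world points into the image with an ideal pinhole model.

    R_cw and t_cw map world to camera coordinates: X_cam = R_cw @ X_world + t_cw.
    Returns pixel coordinates (NaN for points behind the camera), depths,
    and a boolean mask of points in front of the camera.
    """
    pts = np.asarray(points_world, dtype=float)
    cam = pts @ R_cw.T + t_cw
    z = cam[:, 2]
    in_front = z > 0
    uv = np.full((len(pts), 2), np.nan)
    proj = cam[in_front] @ K.T
    uv[in_front] = proj[:, :2] / proj[:, 2:3]
    return uv, z, in_front

def render_depth(uv, z, valid, width, height):
    """Rasterize projected points into a depth map, keeping the nearest depth per pixel."""
    depth = np.full((height, width), np.inf)
    cols = np.round(uv[valid, 0]).astype(int)
    rows = np.round(uv[valid, 1]).astype(int)
    inside = (cols >= 0) & (cols < width) & (rows >= 0) & (rows < height)
    # Unbuffered minimum so that several points hitting one pixel keep the closest.
    np.minimum.at(depth, (rows[inside], cols[inside]), z[valid][inside])
    return depth

# Hypothetical intrinsics and an identity camera pose.
K = np.array([[100.0, 0.0, 50.0],
              [0.0, 100.0, 40.0],
              [0.0, 0.0, 1.0]])
R_cw, t_cw = np.eye(3), np.zeros(3)
points = np.array([[0.0, 0.0, 2.0],    # on the optical axis
                   [0.0, 0.0, 4.0],    # same pixel, farther away
                   [1.0, 0.0, 2.0],    # off-axis
                   [0.0, 0.0, -1.0]])  # behind the camera

uv, z, valid = project_points(points, R_cw, t_cw, K)
depth = render_depth(uv, z, valid, width=120, height=80)
```

A per-object mask can be produced the same way by recording, for each pixel, which object supplied the nearest point.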

Ground Truth Format

We support the Python (NumPy) .npz format as well as a plain-text format. We also provide the raw ROS .bag recordings and the C++ code used to process them. Please see our documentation for detailed instructions on how to read and parse the ground truth, and how to re-generate the data at higher frame rates.
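Before consulting the documentation for the exact layout, it can help to inspect an `.npz` archive generically. The sketch below lists the arrays stored in an archive along with their shapes and dtypes, using only standard NumPy calls. It makes no assumption about the key names EVIMO uses: the demo archive and its `placeholder_*` keys are invented for this example, and `summarize_npz` is a hypothetical helper, not part of the EVIMO tools.

```python
import os
import tempfile

import numpy as np

def summarize_npz(path, allow_pickle=False):
    """Return {array name: (shape, dtype string)} for every array in an .npz archive.

    allow_pickle is off by default; archives that store Python objects need it
    enabled, which should only be done for files from a trusted source.
    """
    summary = {}
    with np.load(path, allow_pickle=allow_pickle) as archive:
        for name in archive.files:
            arr = archive[name]
            summary[name] = (arr.shape, str(arr.dtype))
    return summary

# Demo on a throwaway archive with placeholder keys (not EVIMO's real keys).
with tempfile.TemporaryDirectory() as tmp:
    demo_path = os.path.join(tmp, "demo.npz")
    np.savez(demo_path,
             placeholder_a=np.zeros((2, 3)),
             placeholder_b=np.arange(4, dtype=np.int32))
    demo_summary = summarize_npz(demo_path)

for name, (shape, dtype) in demo_summary.items():
    print(name, shape, dtype)
```

To use it on a downloaded sequence, pass the path of that sequence's `.npz` file to `summarize_npz` in place of the demo archive.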